MERONYM LABS

We develop and evaluate AI systems for safety-critical health and public health domains

At Meronym Labs, our research focuses on public health, health systems, and healthcare settings. Our work spans building new AI models for health-related challenges and studying how deployed systems behave and fail in safety-critical contexts. We take an integrated approach in which AI safety, model development, and real-world deployment in health and public health are studied together, creating a feedback loop between methods, models, and impact.

Research areas

AI Safety in Health Systems

Our research develops technical methods and evaluation frameworks to identify and mitigate high-risk behaviors in AI systems for health and health systems, including jailbreaks, harmful outputs, and unsafe system behavior. We study robustness and generalization under distribution shift, develop stress-testing and adversarial evaluation approaches, and design post-deployment monitoring methods to detect drift, misuse, and emergent unsafe behavior in real-world settings.

AI for Public Health

We use computational methods to study how social determinants of health shape health outcomes and how these phenomena are expressed in large-scale public health and social data. Our computational approaches characterize population-level risk for suicide and related outcomes, and examine how health-relevant signals are distributed, amplified, or obscured across communities. We translate these analyses into actionable evidence for policymakers, clinicians, and other non-technical stakeholders.

AI Model Development for Health

We develop AI models for clinical and health system applications using structured and unstructured health data. This work focuses on outcome prediction, clinical trajectory modeling, and related decision-support problems. We design training objectives and regimes that account for health system constraints such as missing or noisy data, temporal irregularity, and systematic effects of clinical documentation and care processes.

Our Team

Annika Marie Schoene, PhD | Director and Principal Investigator

Annika Marie Schoene is an Assistant Professor in the Department of Public Health and Health Sciences at Northeastern University. Her research focuses on the safety, robustness, and evaluation of large-scale AI systems, with particular emphasis on applications in healthcare. Dr. Schoene develops technical methods and evaluation frameworks to study how AI systems behave and fail in real-world settings, including identifying high-risk behaviors such as jailbreaks, harmful outputs, and unsafe system behavior.

Current Members

Gautham Vijay Kumar

PhD student in Computer Science

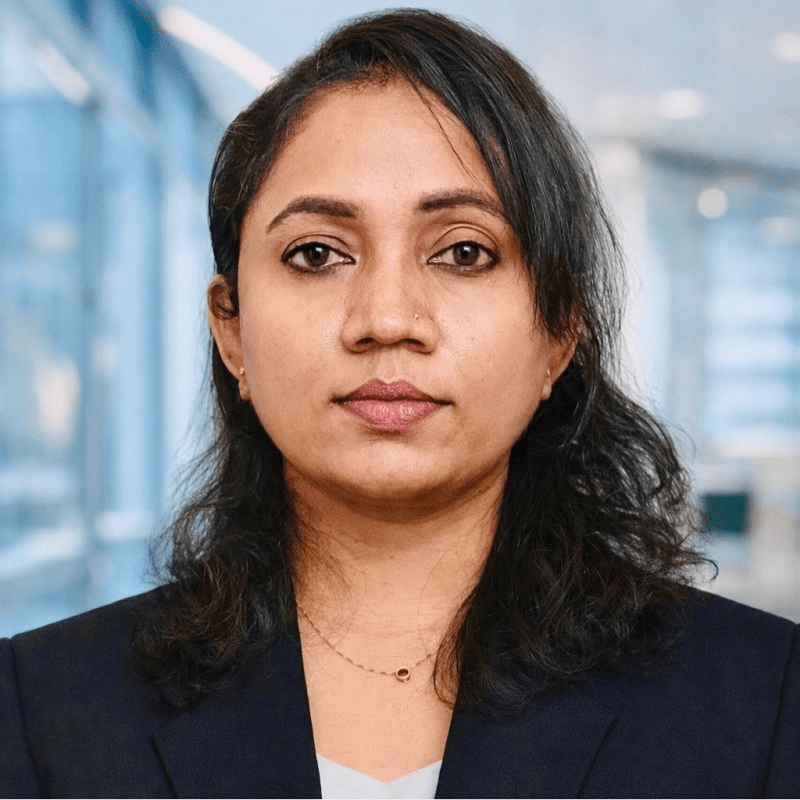

Resmi Ramachandranpillai, PhD

Research Scientist

Laura Haaber Ihle, PhD

AI Ethicist

Former Members

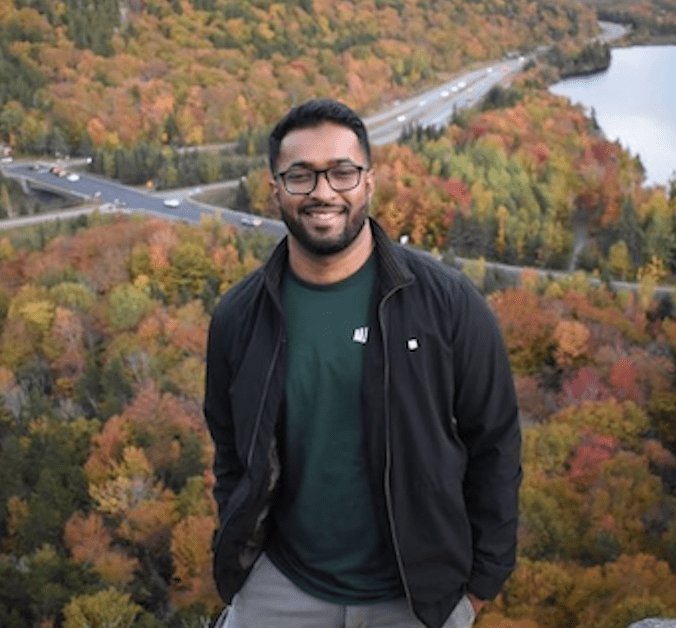

Anson Antony

Research Assistant

MS in Computer Science

Benjamin Irving

Research Assistant

BS in Computer Science

Sia Shah

IHESJR Intern

BS in Health Science

Janelle Lardizabal

IHESJR Intern

BS in Behavioral Neuroscience

Iman Ibrahim

IHESJR Intern

BA in Public Health (minor in Data Science)